- Phase 3 supported Routing Protocols:
  - RIP
  - EIGRP
  - OSPF
  - BGP
  - ODR
- Preferred EIGRP and BGP
  - Summarization options are better.

- Phase 3 General
  - mGRE on hub and spokes
  - NHRP required for spoke registration to hub
  - NHRP required for spoke to spoke resolution
  - When a hub hairpins traffic over the same interface:
    - Sends NHRP redirect message back to packet source.
    - Forwards original packet down to spoke via RIB.
  - Routing
    - Summarization/default routing to hub allowed.
      - Results in NHRP routes for spoke to spoke tunnel.
    - With no-summary, NHO performed for spoke to spoke tunnel.
      - Next hop is changed from hub IP to spoke IP.
    - Next hop on spokes is always changed by the hub.
      - Because of this, NHRP resolution is triggered by hub.
    - Multi-level hierarchy works without daisy-chaining.

Configuration:

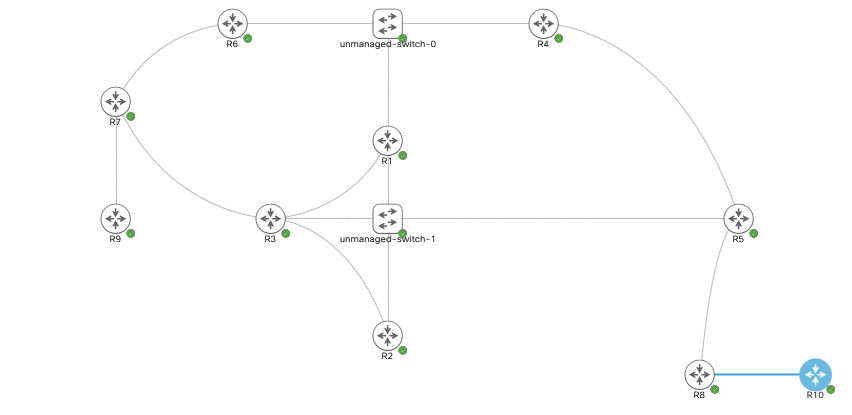

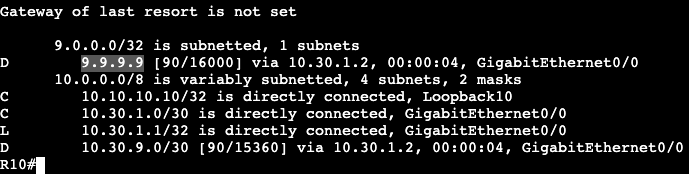

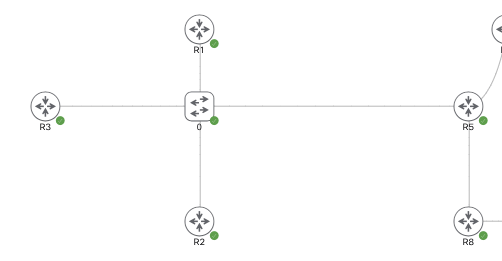

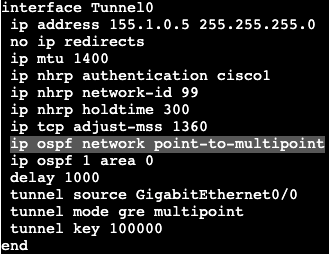

The current topology is the one shown above: R1 through R3 are DMVPN Phase 2 spokes and R5 is the hub. Each router runs OSPF over its Tunnel0 interface and advertises its loopback.

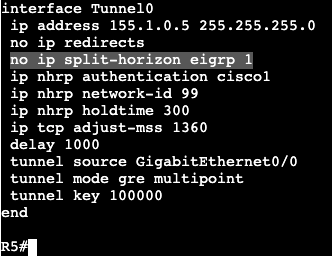

R5/Hub:

Physical IP – Gig0/0 – 96.76.43.137/29

VPN/Tunnel IP – Tu0 – 155.1.0.5

Loopback – L5 – 5.5.5.5/32

R1/Spoke:

Physical IP – Gig0/0 – 96.76.43.140/29

VPN/Tunnel IP – Tu0 – 155.1.0.1

Loopback – L1 – 1.1.1.1/32

R2/Spoke:

Physical IP – Gig0/0 – 96.76.43.138/29

VPN/Tunnel IP – Tu0 – 155.1.0.2

Loopback – L2 – 2.2.2.2/32

R3/Spoke:

Physical IP – Gig0/0 – 96.76.43.137/29

VPN/Tunnel IP – Tu0 – 155.1.0.3

Loopback – L3 – 3.3.3.3/32

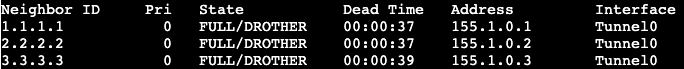

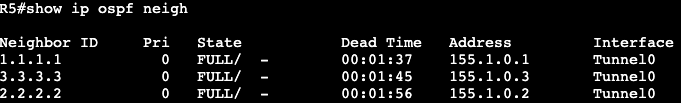

The OSPF network type is currently set to broadcast on every tunnel interface, with the hub as DR. If the network type on each tunnel interface is changed to ‘point-to-multipoint’, the DR/BDR election goes away.
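As a minimal sketch, the network type change described above would look roughly like this on the hub and on every spoke. The interface name Tunnel0 comes from the topology notes; the rest of the tunnel and OSPF configuration is assumed to be in place already.

```
! Applied on R5 and on each spoke (R1, R2, R3)
interface Tunnel0
 ! point-to-multipoint does not elect a DR/BDR
 ip ospf network point-to-multipoint
```

The network type has to match on both ends of each adjacency, so the change goes on every router in the DMVPN cloud, not only on the hub.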

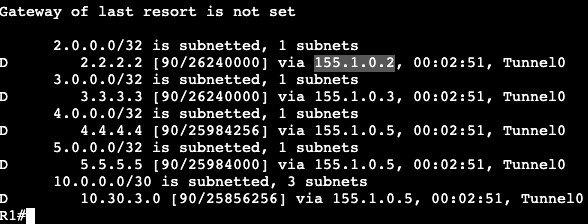

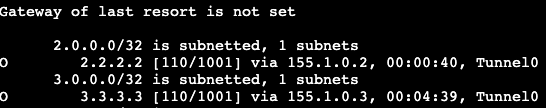

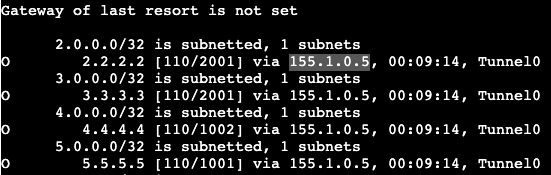

Now if the routing table is shown on a spoke, the next hop for every other spoke's routes is the hub, unlike with the broadcast network type before. This is because Next Hop Override (NHO) is not functioning yet.

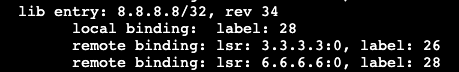

To enable NHO, we need to add some commands. On each spoke we’ll add ‘ip nhrp shortcut’, and the hub gets ‘ip nhrp redirect’. Both commands go on the Tunnel0 interface or, more generally, on whichever tunnel interfaces carry DMVPN.
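Put together, the two commands named above could be applied as in the sketch below. It assumes the DMVPN tunnel is Tunnel0 on every router, as in this topology, and shows only the Phase 3 additions rather than a full tunnel configuration.

```
! Hub (R5): send an NHRP redirect when traffic hairpins on the tunnel
interface Tunnel0
 ip nhrp redirect
!
! Spokes (R1, R2, R3): act on the redirect and install the shortcut
interface Tunnel0
 ip nhrp shortcut
```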

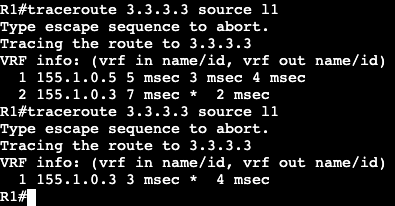

Now when a traceroute is run from R1 to R2, the traffic first goes to the hub and then down to the R2 spoke. If the traceroute is run again, the traffic goes directly from spoke to spoke.

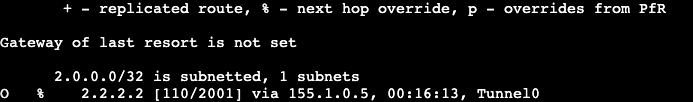

R1's routing table now shows a % sign next to its route to R2's loopback, 2.2.2.2, which means next hop override is taking place. The next hop does not change in the routing table itself, but the overriding next hop can be seen in the CEF table.
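One way to check both views from R1 is sketched below. The prefix 2.2.2.2 is R2's loopback from the topology notes; the commands are standard IOS show commands, and their output is left out here because it depends on the software release.

```
! Routing table: look for the % flag on the route to 2.2.2.2
R1# show ip route ospf
! CEF table: shows the next hop actually used for forwarding
R1# show ip cef 2.2.2.2
```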

EIGRP:

OSPF has pretty big limitations in this type of topology when it comes to route manipulation via areas and summarization. If summarization is needed, BGP or EIGRP is a much better fit.

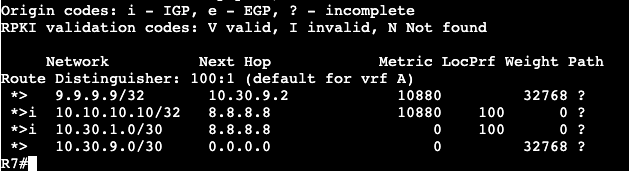

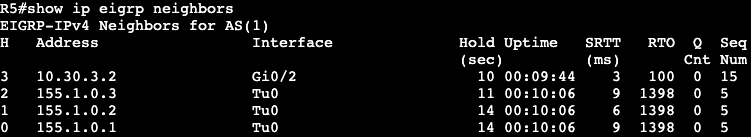

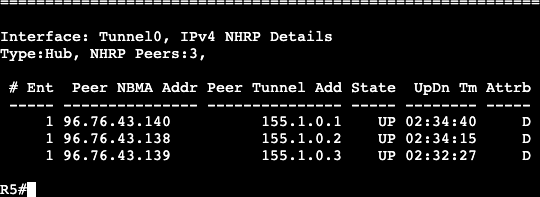

The above topology was changed to run EIGRP. We’re enabling all the same interfaces in the EIGRP process and there are three EIGRP DMVPN neighbors on the hub. Each spoke is receiving routes over the DMVPN tunnels.

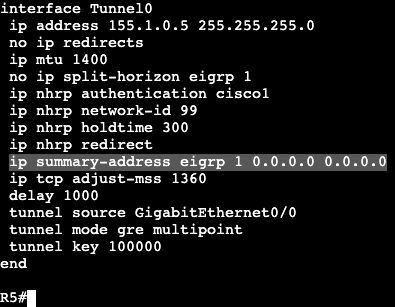

In the image directly above we’re reading the routing table for R1. Notice that it’s only receiving routes from the hub, R5, and none of the routes from the other spokes, R2 and R3. That’s because the hub needs the command ‘no ip split-horizon eigrp 1’.

Once that’s added, R1 begins receiving the routes from the other DMVPN spokes. The hub still advertises itself as the next hop for those routes, which is EIGRP's default behavior. In Phase 2 we’d disable that on the hub with ‘no ip next-hop-self eigrp 1’, but that’s unnecessary in Phase 3 because the hub's NHRP redirect prompts the spokes to build direct tunnels to each other.
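For reference, the split-horizon change on the hub would look something like the sketch below. It assumes classic-mode EIGRP with autonomous system 1 (the AS number comes from the command quoted above) running on Tunnel0.

```
! Hub (R5): allow routes learned on Tunnel0 to be advertised back out Tunnel0
interface Tunnel0
 no ip split-horizon eigrp 1
```

Split horizon stays enabled on the spokes; only the hub has to re-advertise routes out of the interface on which it learned them.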

With EIGRP enabled we can run a traceroute from R1 to R2 and we’ll see the first packet gets directed to the hub, R5. Running the traceroute again shows traffic going directly from spoke to spoke. In the routing table of R1 the ‘%’ flag shows that an NHO is occurring, and the CEF table shows the next hop that the override installed.

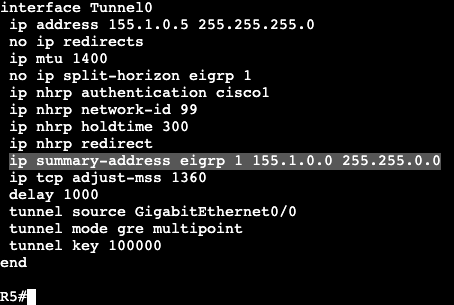

Now because this is EIGRP, we can easily summarize from the hub. On R5 we’ll enter the commands below and then look at a spoke routing table.

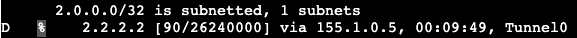

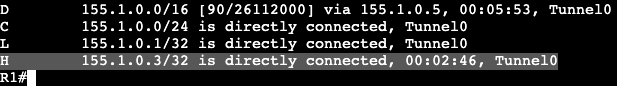

R1 Route Table:

Now a traceroute will be performed from R1’s loopback to R3’s loopback.

On the first try the packet goes through the hub. On the second try it goes directly over the dynamic spoke-to-spoke tunnel.

Now in the routing table of R1 a route labeled ‘H’ is visible, which means it came from NHRP. When using summarization in DMVPN, NHRP takes care of the more specific routes when they’re needed, i.e. when traffic is actually going from spoke to spoke.
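The test and the checks described above could be run from R1 roughly as follows. The addresses are the loopbacks from the topology notes; ‘show ip route nhrp’ is assumed to be available on the IOS release in use, and output is omitted because it varies by release.

```
! Run twice: the first pass goes via the hub, the second over the shortcut
R1# traceroute 3.3.3.3 source 1.1.1.1
! Routes installed by NHRP (flagged H in the routing table)
R1# show ip route nhrp
! NHRP mappings, including the dynamically learned spoke
R1# show ip nhrp
```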

Default Route:

This summarization can be done with a single default route as well. On the hub the earlier summary address has been removed and a 0.0.0.0 0.0.0.0 summary has been added in its place.
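A sketch of the default-route summary on the hub is shown below, again assuming classic-mode EIGRP AS 1 on Tunnel0. Removing the earlier summary is not shown, since its prefix appears only in the screenshot.

```
! Hub (R5): advertise only a default route to the spokes
interface Tunnel0
 ip summary-address eigrp 1 0.0.0.0 0.0.0.0
```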

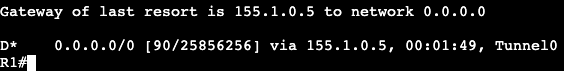

On a spoke the ‘show ip route eigrp’ now looks like below:

After pinging R2 (spoke) and looking at R1’s routing table again, we’ll see that NHRP has added another more specific route in our DMVPN topology.